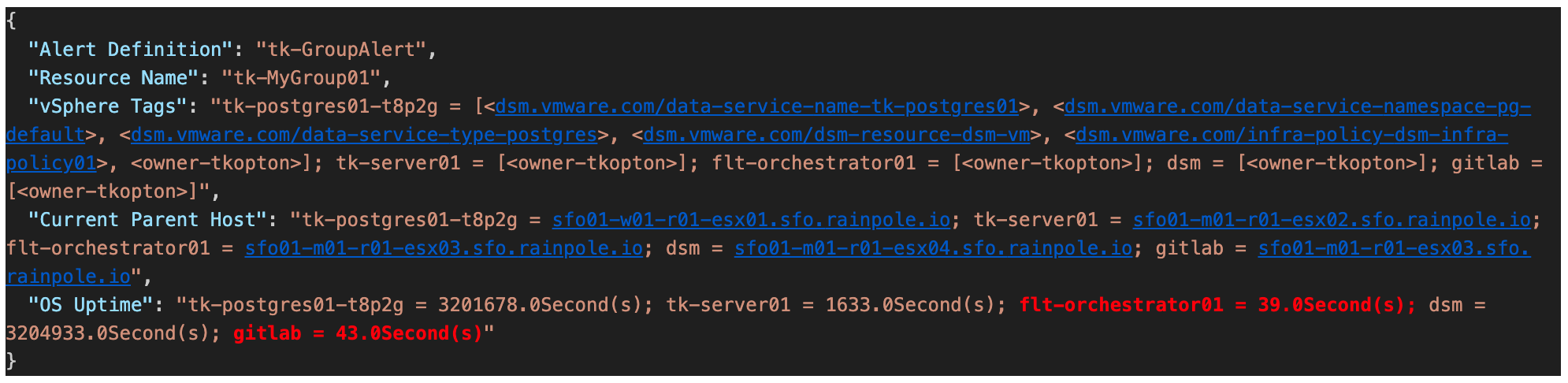

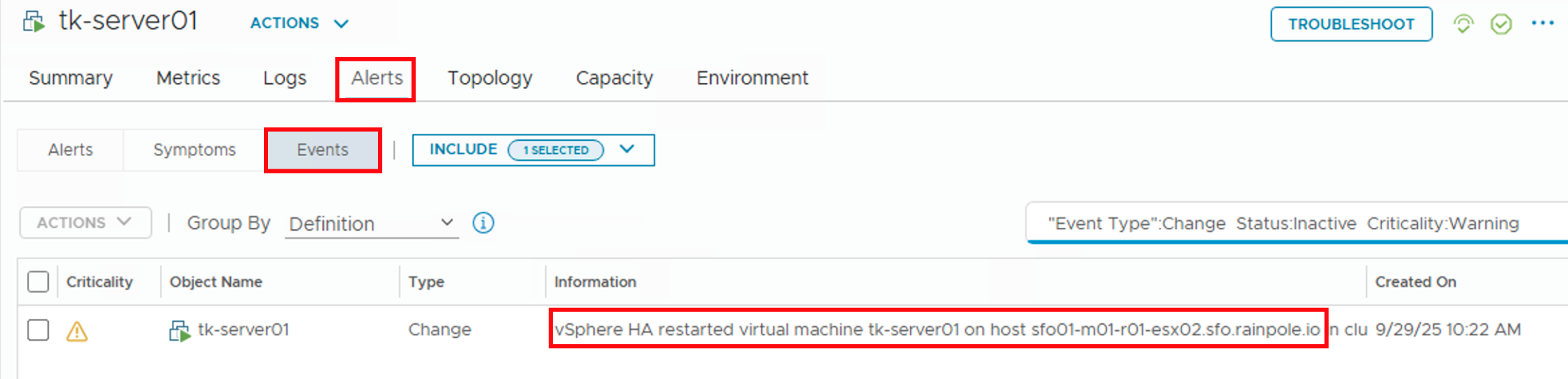

In my previous post (https://thomas-kopton.de/vblog/?p=2198), I detailed how to track HA-induced Virtual Machine restarts in VCF Operations, including the required symptoms, alert definitions, and REST Webhook notifications. However, in larger setups, with potentially over 20 VMs per ESX host, receiving 20 individual notifications is impractical. This simply creates alert fatigue and undermines the entire alerting …

Tag: vRealize Operations

Monitoring HA VM Restarts in VCF Operations

Someone recently asked me if there’s a straightforward way to notify Virtual Machine owners as soon as their VMs are affected by an HA event. Apparently, suitable alert definitions are not available out-of-the-box in VCF Operations, and the customer asked for a solution. Scenario: The scenario is therefore quite simple. First Approach: Initially, I considered …

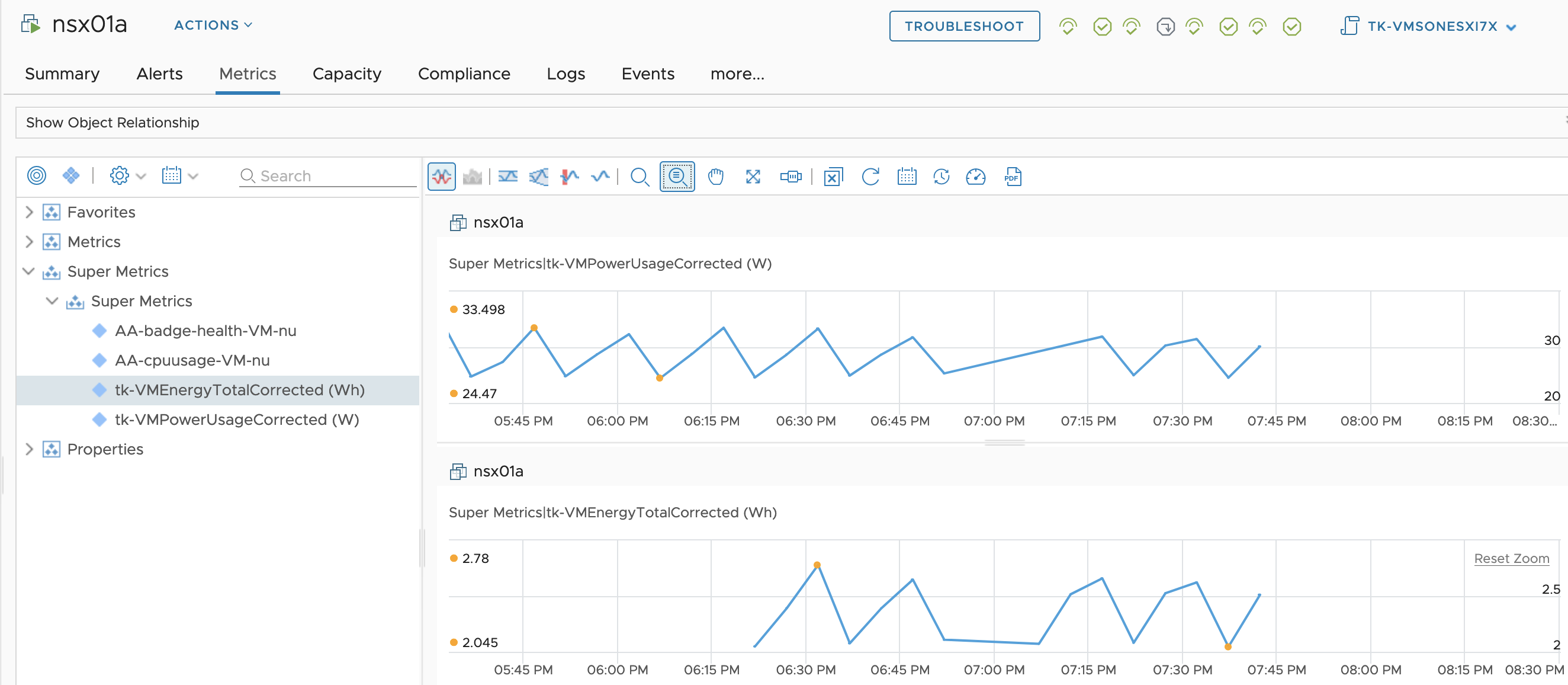

Fixing “Virtual Machine Power Metrics Display in mW” using Aria Operations

In VMware Aria Operations 8.6 (previously known as vRealize Operations), VMware introduced pioneering sustainability dashboards designed to display the amount of carbon emissions avoided through compute virtualization. Additionally, these dashboards offer insights into reducing the carbon footprint by identifying and optimizing idle workloads. This progress was taken even further with the introduction of Sustainability …

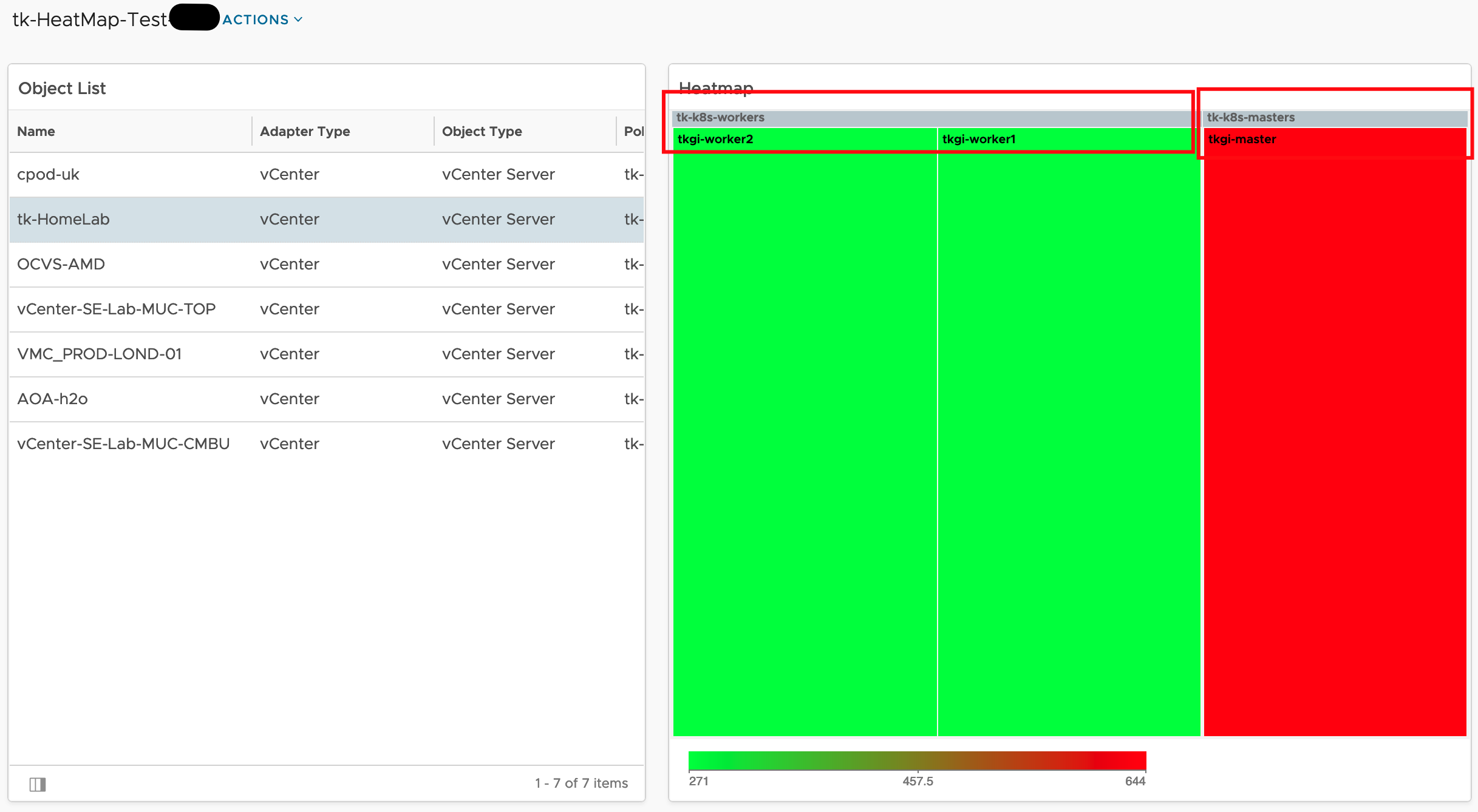

Aria Operations Heatmap Widget meets Custom Groups – Grouping by Property

The Aria Operations Heatmap Widget, which is used in many dashboards, can group objects within the heatmap by other related object types, as in the following screenshot showing Virtual Machines grouped by their respective vSphere Cluster. Problem description: This is a great feature, but the grouping works only using object …

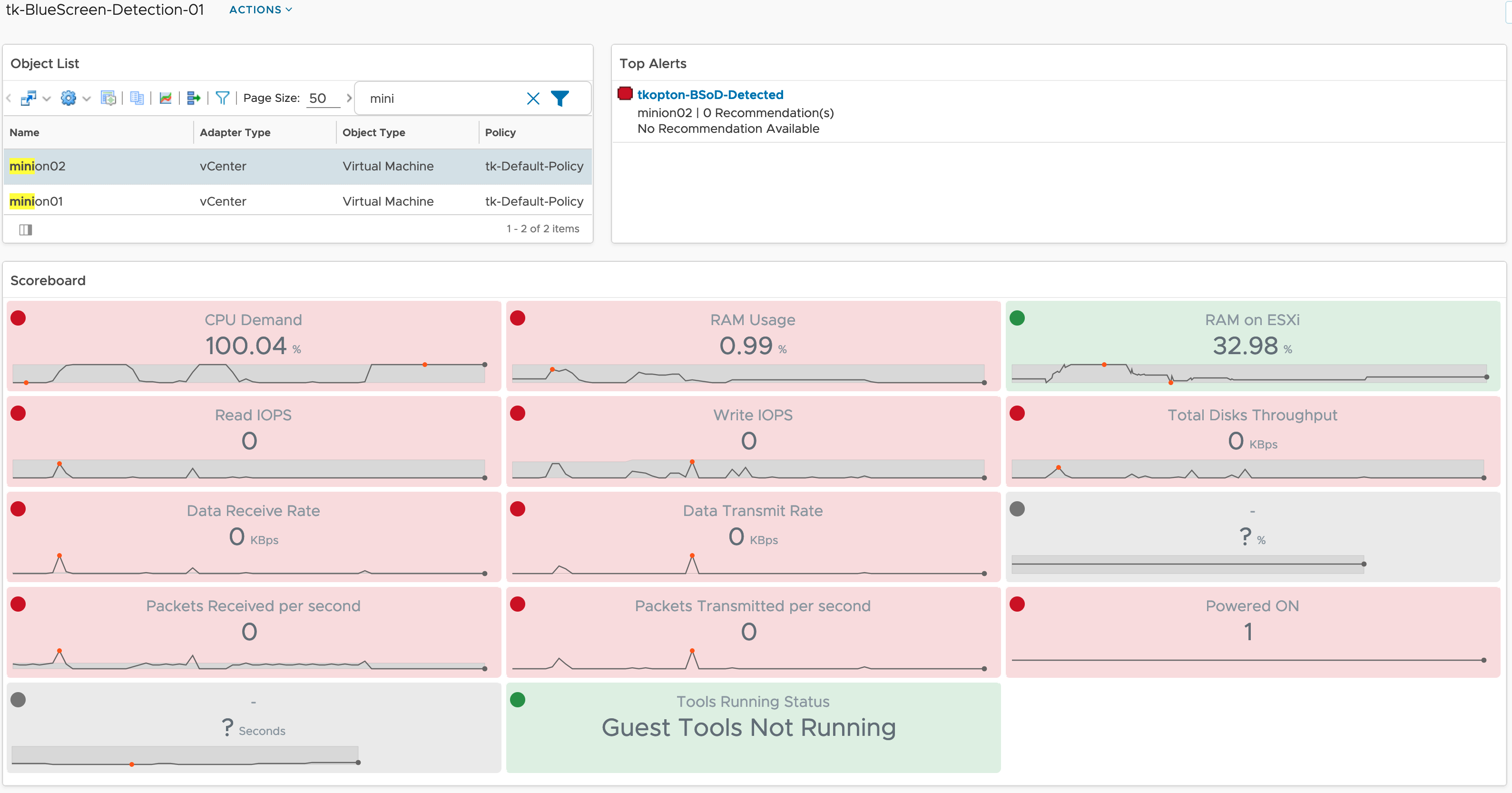

How to detect Windows Blue Screen of Death using VMware Aria Operations

Problem statement: Recently, a customer asked me what would be the best way to get alerted by VMware Aria Operations when a Windows VM stops because of a Blue Screen of Death (BSoD) or a Linux machine suddenly quits working due to a kernel panic. Even if it looks like a piece of …

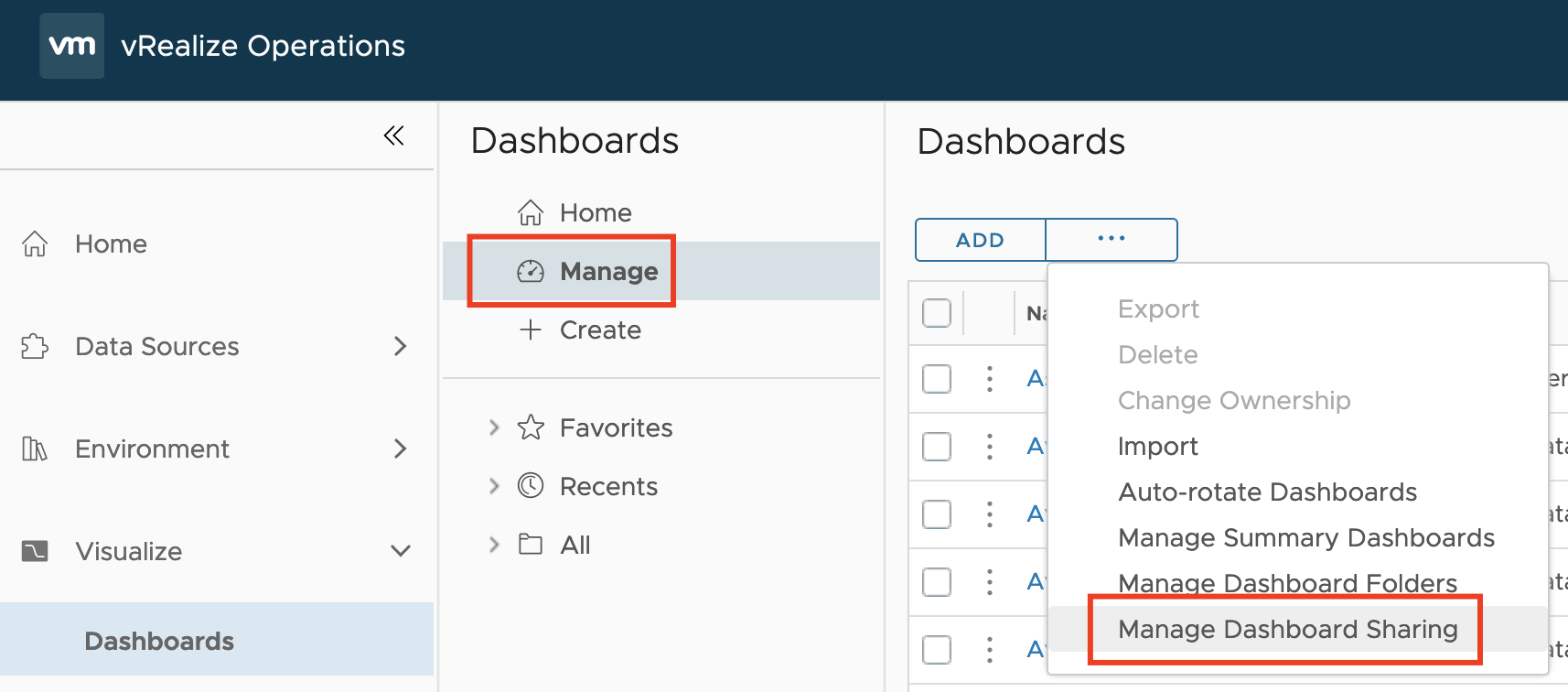

VMware Explore Follow-up 2 – Aria Operations Dashboard Permissions Management

Another question I was asked during my “Meet the Expert – Creating Custom Dashboards” session, which I could not answer due to the limited time, was: “How to manage access permissions to Aria Operations Dashboards in a way that will allow only a specific group of content admins to edit only a specific group of dashboards?” Even …

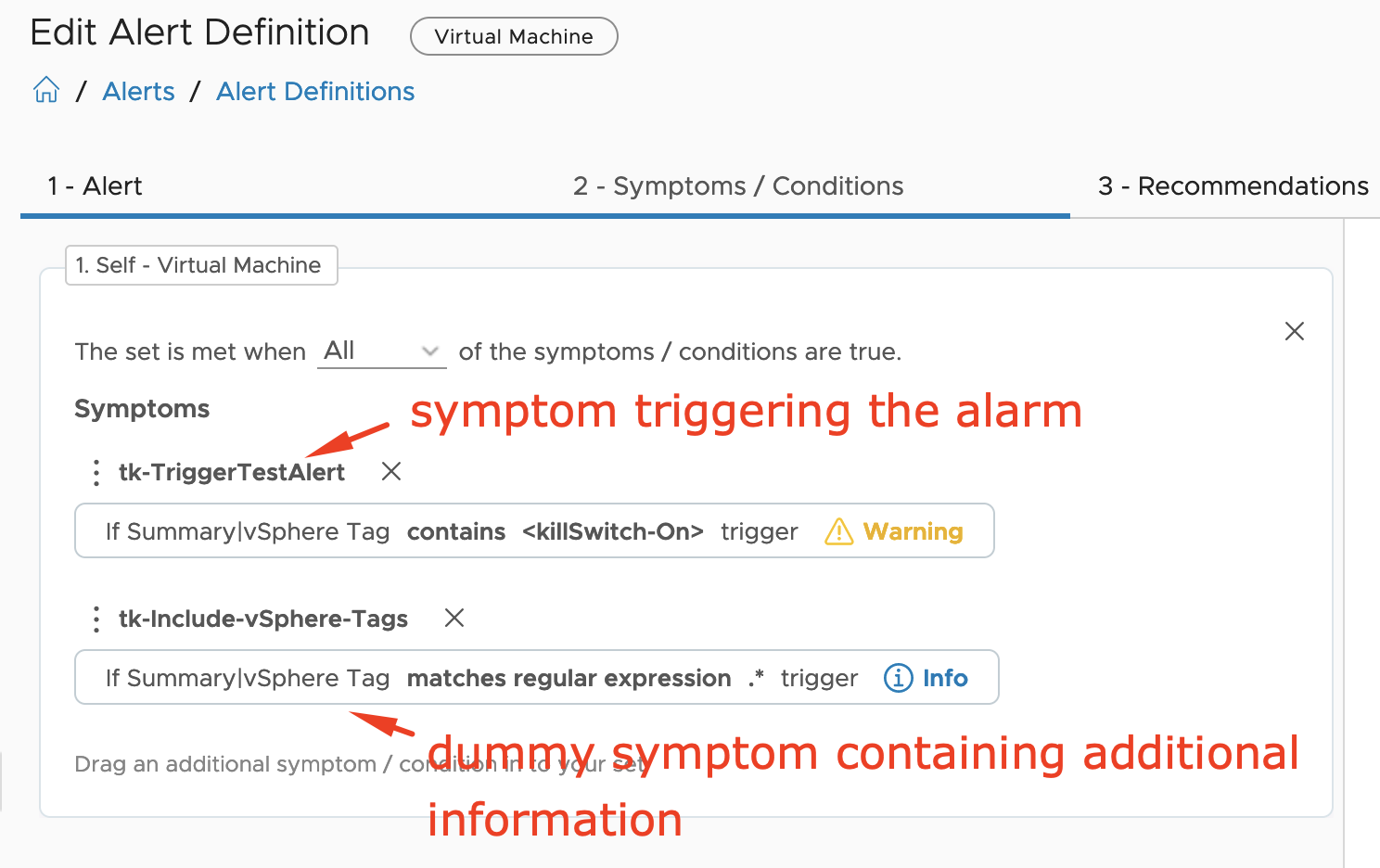

VMware Explore Follow-up – Aria Operations and SNMP Traps

During one of my “Meet the Expert” sessions this year in Barcelona, I was asked if there is an easy way to use SNMP traps as an Aria Operations notification and let the SNMP trap receiver decide what to do with the trap based on included information other than the alert definition, object type or object …

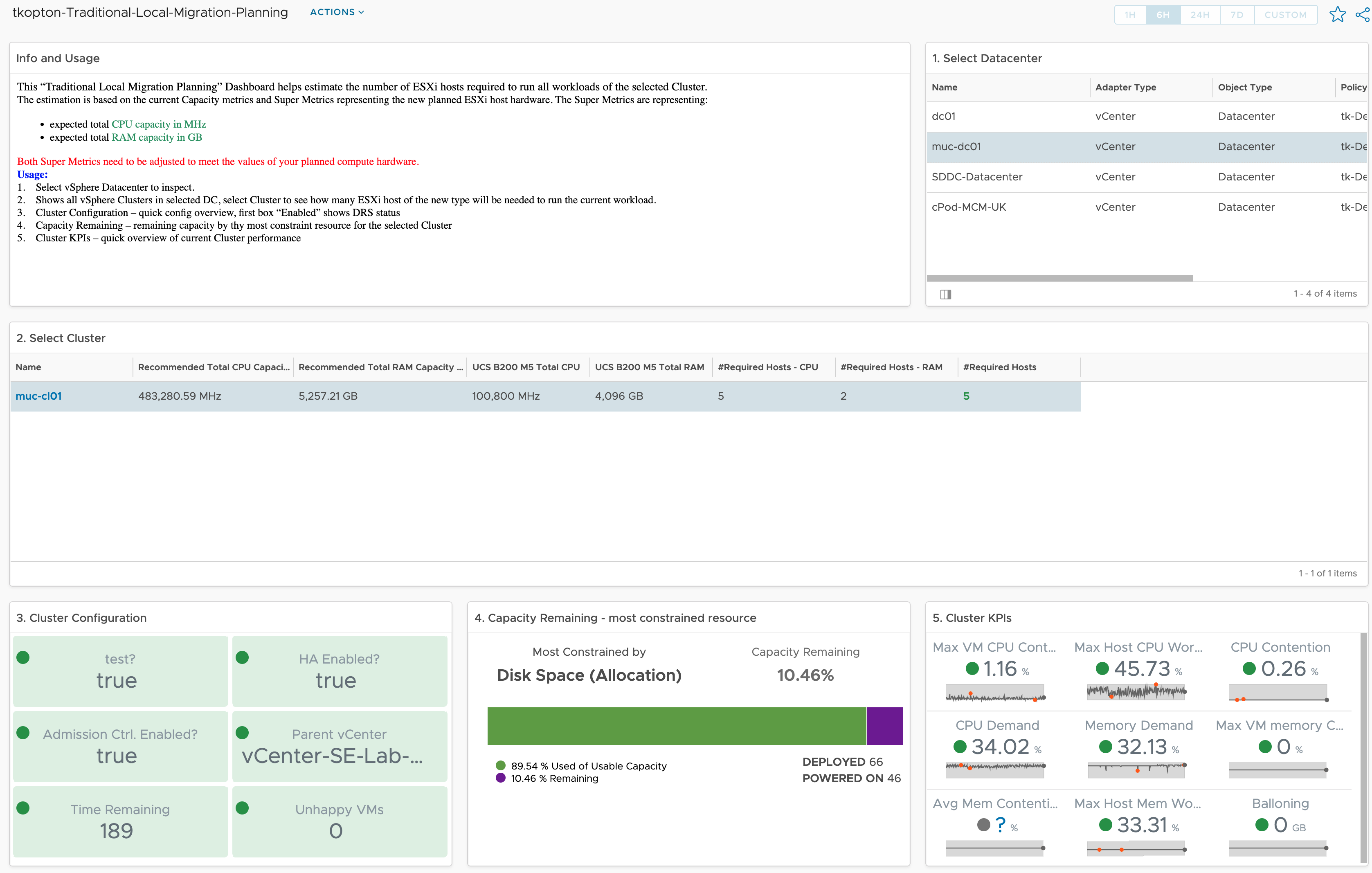

How vRealize Operations helps size new vSphere Clusters

In “ESXi Cluster (non-HCI) Rightsizing using vRealize Operations”, I described how to use vRealize Operations and the numbers calculated by the Capacity Engine to estimate the number of ESXi hosts that could be moved to other clusters or decommissioned. The corresponding dashboard is available via VMware Code. In this post, I describe the opposite …

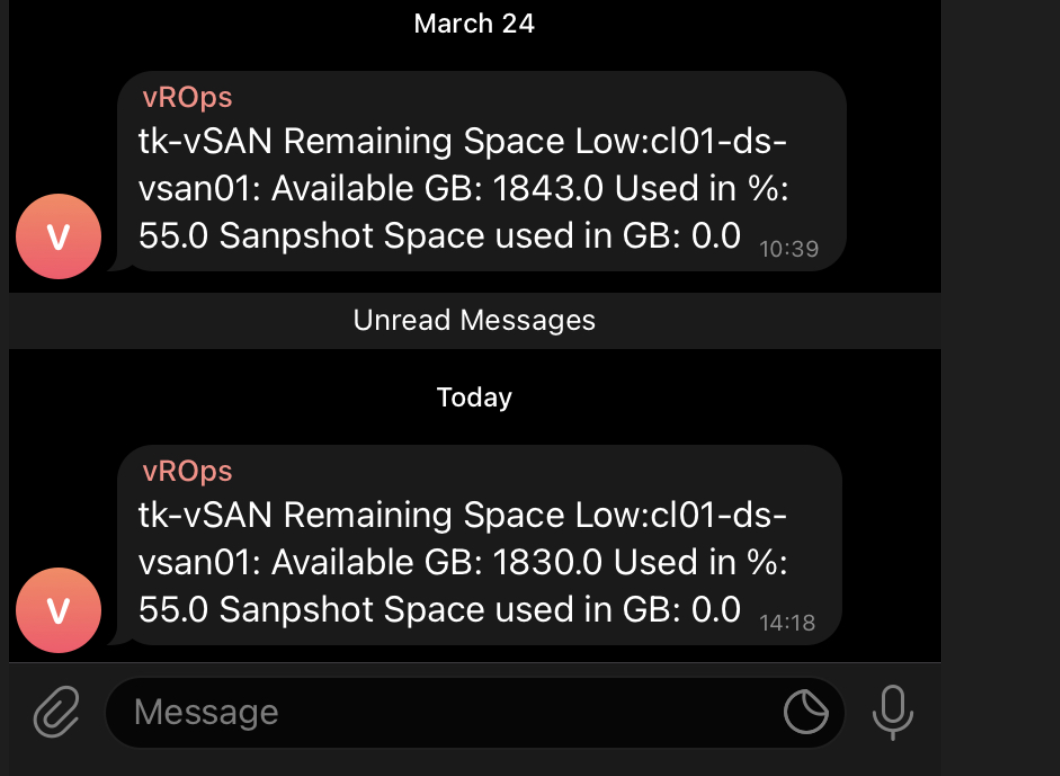

vRealize Operations and Telegram – Alerts on your Phone

As you all know, vRealize Operations is a perfect tool to manage and monitor your SDDC, and in case of issues vROps creates alerts and informs you as quickly as possible, providing many details related to each alert. Without any additional customization, vRealize Operations displays the alerts in the Alerts tab. Sending alerts via email …

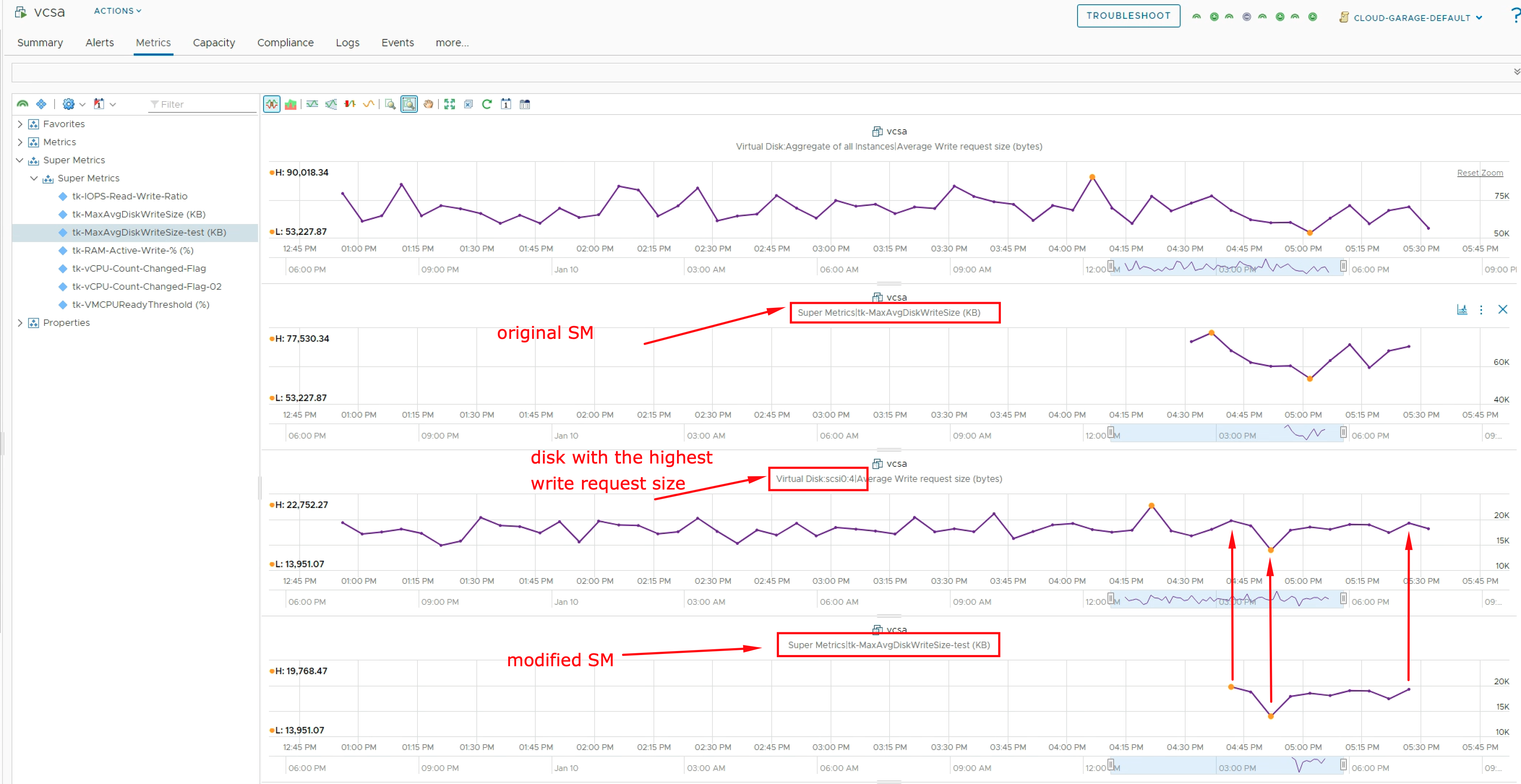

Exclude “Aggregate” Instanced Metric using vRealize Operations Super Metric

As you know, vRealize Operations collects a large number of metrics. Some of these metrics are so-called “Instanced Metrics” and are disabled in the default configuration in newer vROps versions. A list of disabled instanced metrics for, e.g., the Virtual Machine object type is available here: https://docs.vmware.com/en/vRealize-Operations/8.6/com.vmware.vcom.metrics.doc/GUID-1322F5A4-DA1D-481F-BBEA-99B228E96AF2.html#disabled-instanced-metrics-18 If you need any of those metrics, you can enable …