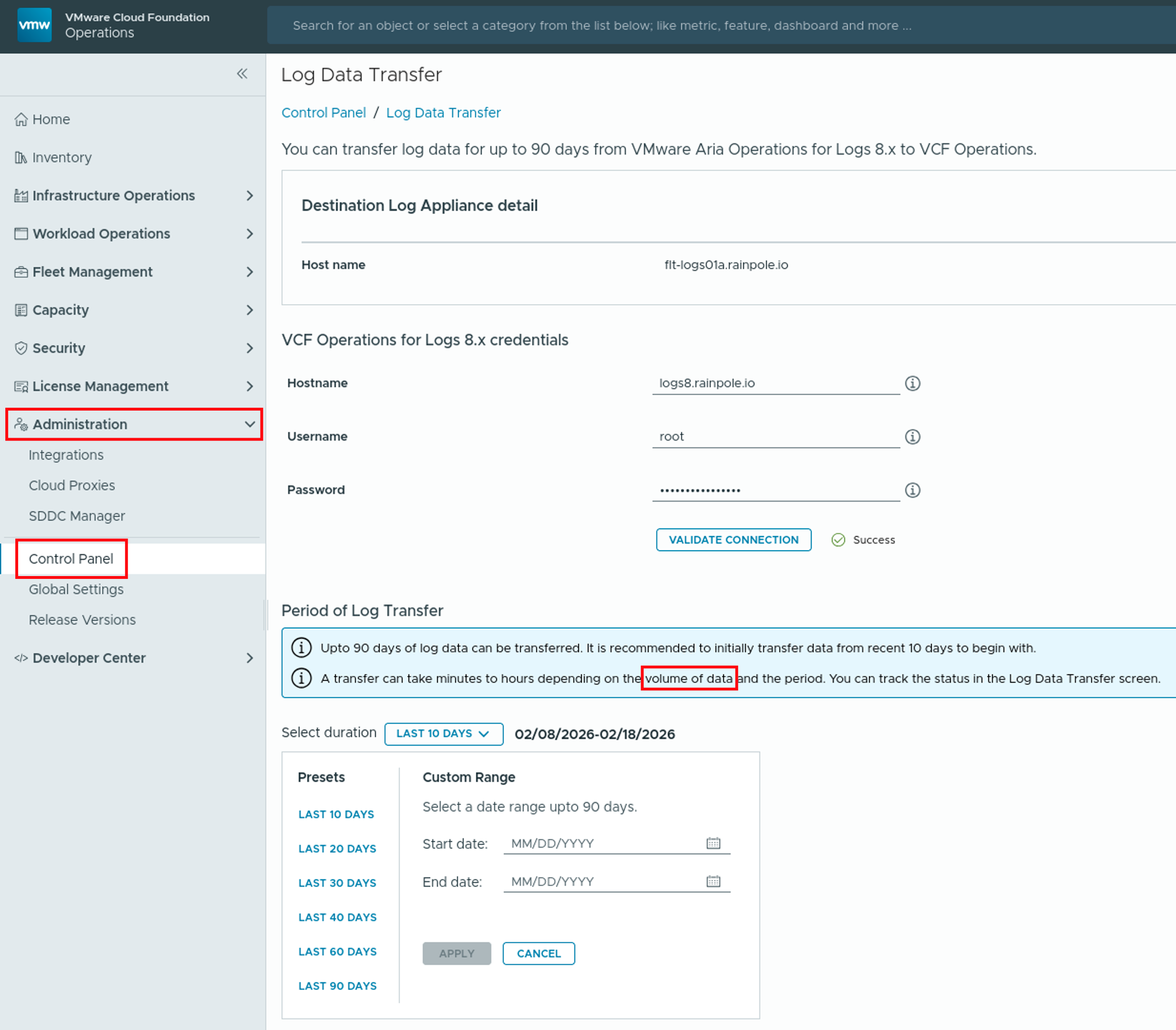

Introduction to Log Data Transfer in VCF Operations 9

With the release of VMware Cloud Foundation (VCF) 9, Logs is now more integrated into Operations. There is no direct, in-place upgrade from Aria Operations for Logs 8.18.x to VCF 9; instead, administrators must perform a fresh deployment of the 9.0 appliance. This deployment …

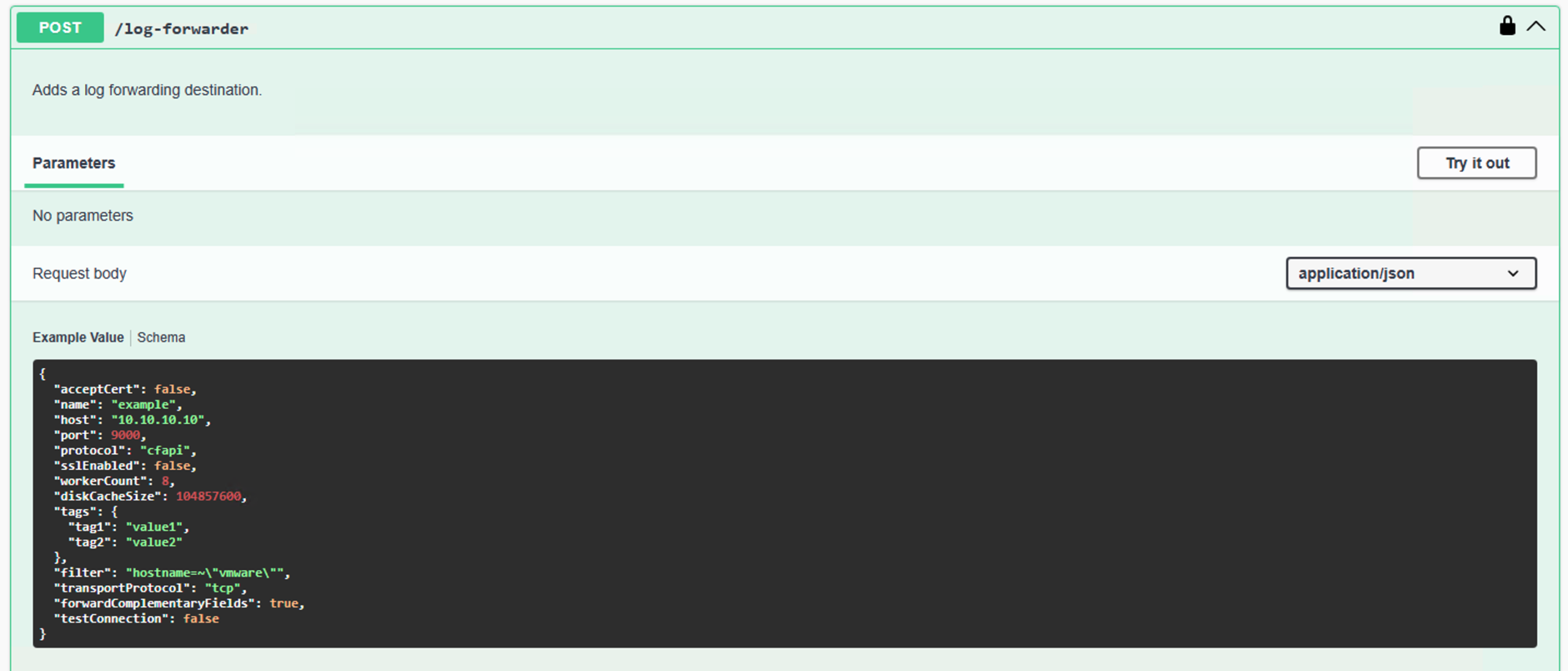

Migrating Content and Config from Aria Operations for Logs 8.18 to VCF Operations for Logs 9

Introduction: As organizations modernize their Private Clouds with VMware Cloud Foundation 9, the logging infrastructure undergoes a significant evolution. Transitioning from VMware Aria Operations for Logs 8.18.x (formerly vRealize Log Insight) to the new VCF Operations for Logs 9 is designed as a side-by-side migration requiring a fresh deployment. While there is a fully supported …

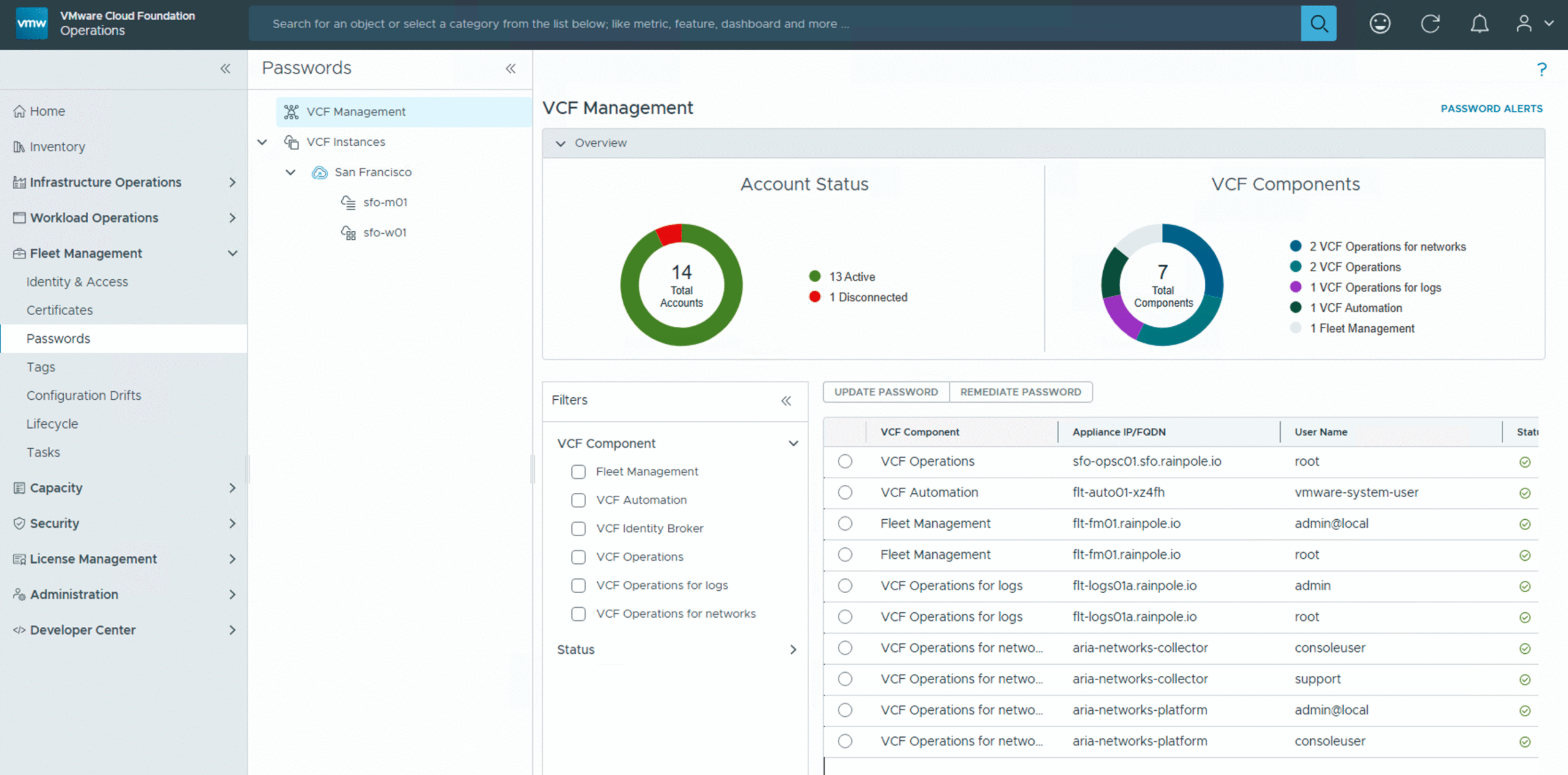

Retrieve Passwords from VCF Fleet Manager: VCF Operations Cloud Proxy Example

One of the great things about an all-encompassing Private Cloud solution like VMware Cloud Foundation (VCF) is how much it automates for you. From automatically installing or updating VCF Operations Cloud Proxies to managing critical passwords like the root user’s within the VCF Fleet Manager, VCF aims to streamline your operations. But what happens when …

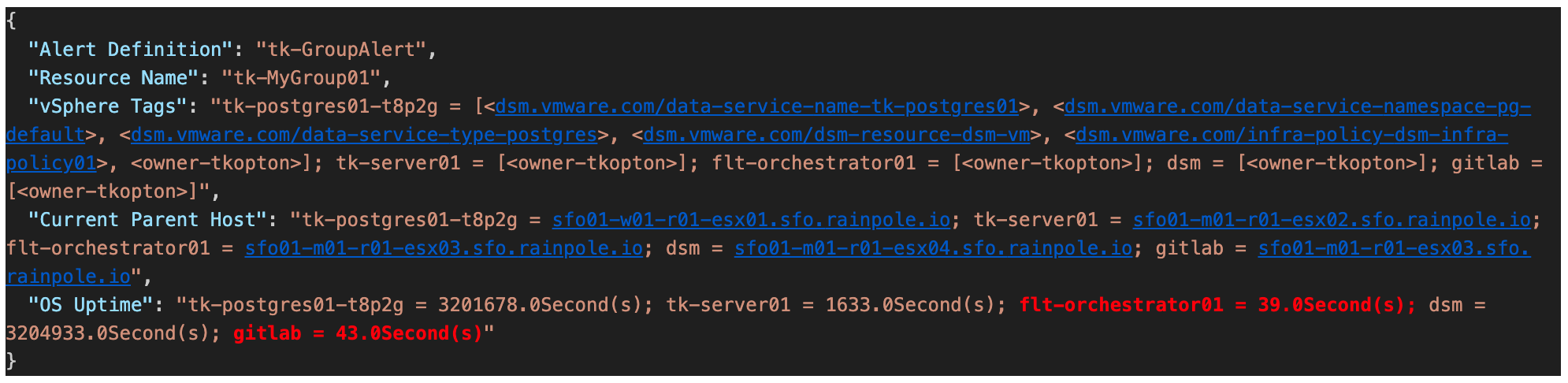

Reducing Alert Noise for VM Groups in VCF Operations

In my previous post (https://thomas-kopton.de/vblog/?p=2198), I detailed how to track HA-induced Virtual Machine restarts in VCF Operations, including the required symptoms, alert definitions, and REST Webhook notifications. However, in larger setups, with potentially over 20 VMs per ESX host, receiving 20 individual notifications is impractical. This simply creates alert fatigue and undermines the entire alerting …

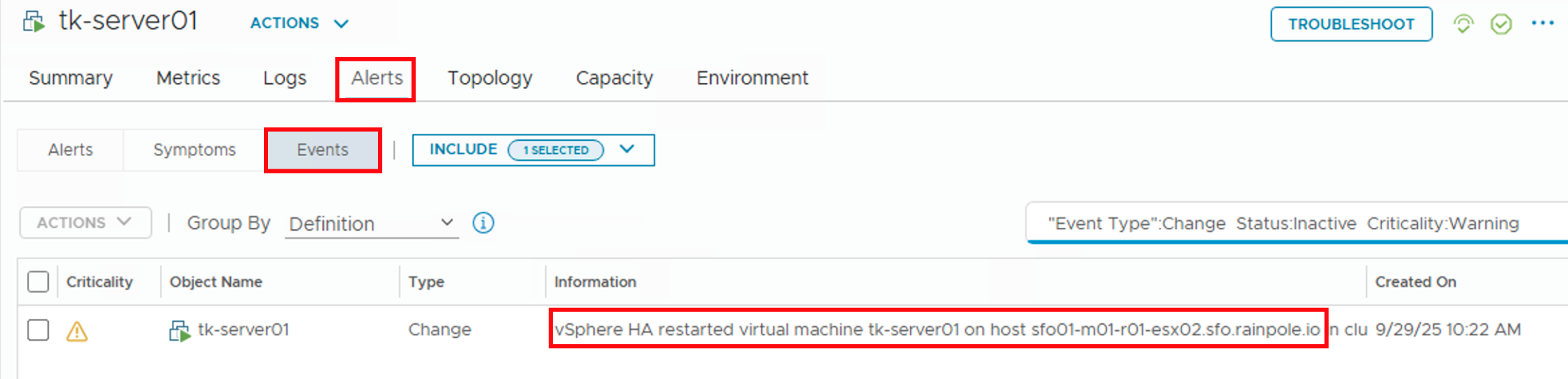

Monitoring HA VM Restarts in VCF Operations

Someone recently asked me if there’s a straightforward way to notify Virtual Machine owners as soon as their VMs are affected by an HA event. Apparently, suitable alarm definitions are not available out of the box in VCF Operations, and the customer asked for a solution. Scenario: The scenario is therefore quite simple. First Approach: Initially, I considered …

Audit Events in VMware Aria Operations

In my previous blog post, I described how to capture and visualize both successful and, more importantly, failed login attempts in an NSX environment using Aria Operations for Logs dashboards. With the release of Aria Operations 8.18, VMware has made it even easier to monitor such audit events in vSphere components directly within Aria Operations itself, thanks …

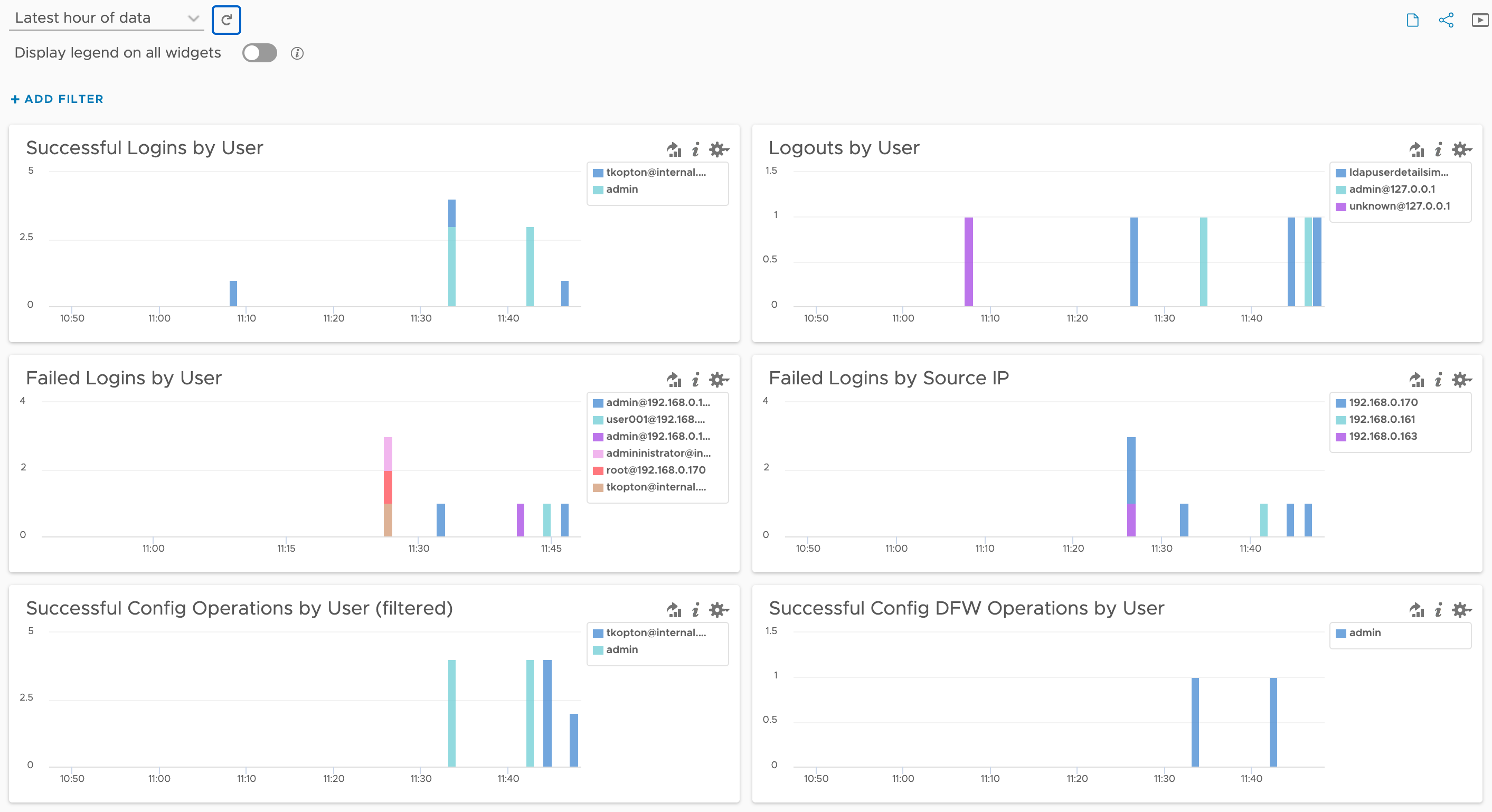

NSX User Ops Audit using Aria Operations for Logs

Recently, a customer asked me if it’s possible to monitor or retrospectively see which user performed specific actions in NSX using the tools available in the VMware Cloud Foundation (VCF) stack — essentially, a typical user actions audit for NSX. Although we don’t have an exact match in the current Aria Operations for Logs NSX …

VMware vSphere Kubernetes Service 3.3 is now GA…

We are excited to announce the general availability of VMware vSphere Kubernetes Service (VKS) 3.3, formerly known as VMware Tanzu Kubernetes Grid (TKG) Service, alongside vSphere Kubernetes release (VKr) 1.32, previously referred to as Tanzu Kubernetes release.

VMware Aria Operations Integration SDK – Part 2 – Demo Project

In the first post on the VMware Aria Operations Integration SDK, I showed how I prepared my development environment and how a demo project is created using the triad of mp-init, mp-test, and mp-build. In this post, we’ll take a closer look at which files a simple, REST API-based project consists of, as well …

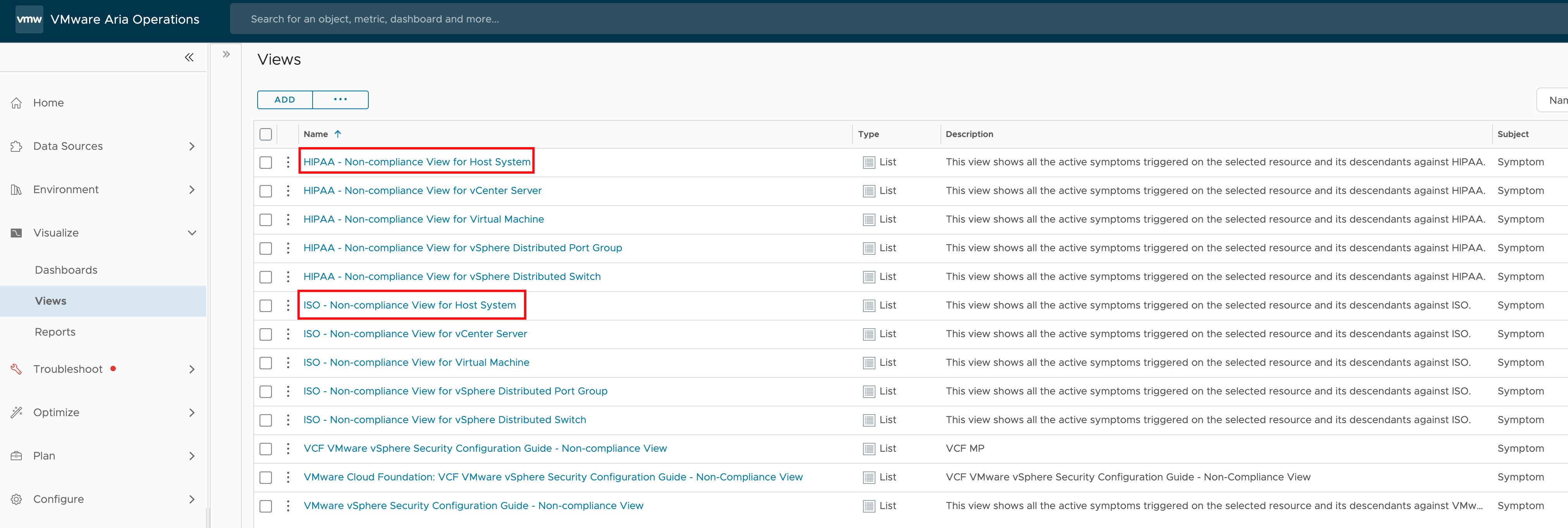

Programmatically Accessing VMware Aria Operations Compliance Check Results

Recently, I was asked if there’s a way to programmatically read the results of a compliance check for specific ESXi hosts in VMware Aria Operations. The use case here is that the customer wants to utilize these compliance results in an automated workflow. This is an interesting question that highlights the growing need for automation …